The 2021 Olympics are approaching, and it is of course very interesting to look at how audio is produced at the Games and how it is distributed to broadcasters around the world. Not least, it is important to us to dig into how we can help ensure that audio quality is maintained in production as well as through transmission lines.


In Practice

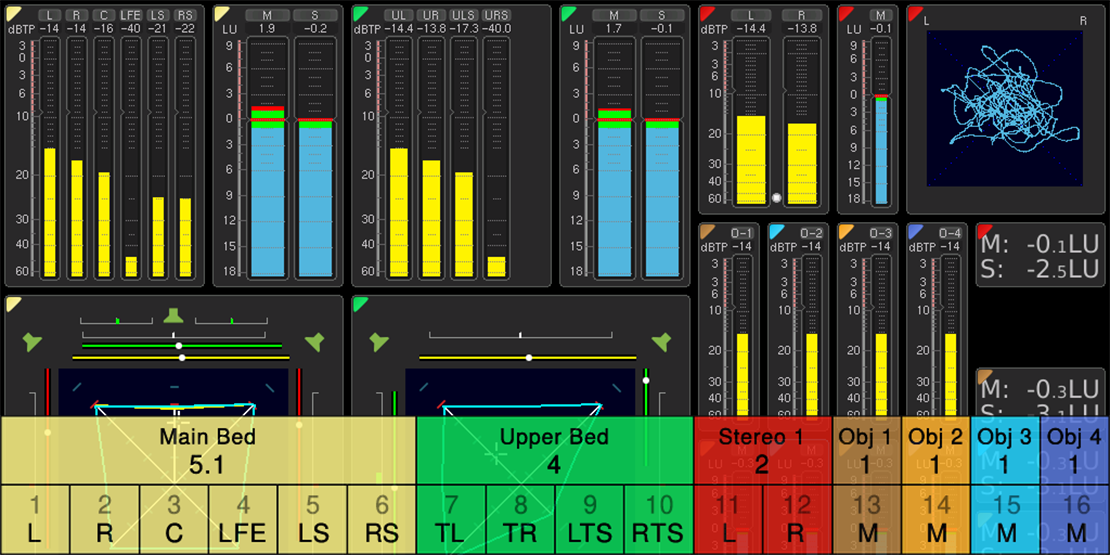

But the technology is one thing; how it is used in practice is another. Through discussions with a number of TV sports channels, we have found that 16 channels is the format commonly required for sports transmission in general, and this is also the official format that OBS (Olympic Broadcasting Services) will use at the 2021 Olympics. OBS is the organization responsible for producing all official audio and video from the Olympics, whether for live television, radio or digital coverage.

All audio at the 2021 Olympics will be produced using 16 audio channels, and the relatively new Dolby® Atmos™ format will form the basis for how those 16 channels are laid out. Although metadata is an integral part of Dolby® Atmos™, OBS will deliver no metadata, as it will be transmitting discrete immersive audio.

Audio Channel Layout

Although all audio will be produced in an immersive format, broadcasters can subscribe to channel layouts ranging from stereo all the way up to 5.1.4 with additional audio objects. Here I'd like to zoom in on the formats in use.

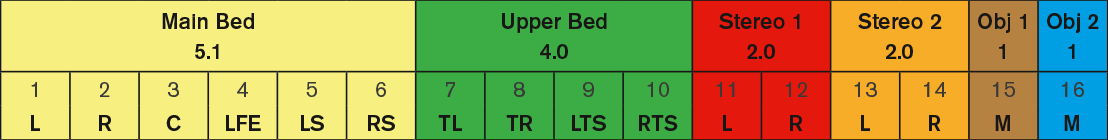

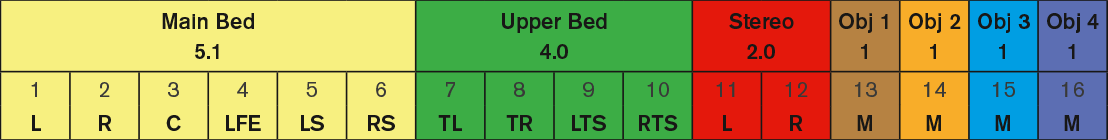

There will be two immersive channel layouts in play. Both are based on a traditional 5.1.4 layout (main bed plus upper bed), but they differ in the number of stereo pairs and objects.

The first layout has two stereo pairs plus two audio "objects":

5.1 (main bed) - 4.0 (upper bed) - 2.0 + 2.0 (2 x stereo) - two objects

The other layout has only one stereo pair, but four objects:

5.1 (main bed) - 4.0 (upper bed) - 2.0 (stereo) - four objects
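Both layouts fill exactly the same 16-channel budget; they just spend it differently. The small sketch below adds up the components of each layout using only the counts given above. The dictionary keys and the `total_channels` helper are illustrative names, not part of any OBS or Dolby specification.

```python
# Channel budget of the two 16-channel immersive layouts described above.
# Counts come from the text; the dictionary structure is only an illustration.
LAYOUTS = {
    "layout_1": {
        "main_bed_5_1": 6,       # L, R, C, LFE, Ls, Rs
        "upper_bed_4_0": 4,      # four ceiling (height) channels
        "stereo_pairs": 2 * 2,   # two stereo pairs
        "objects": 2,
    },
    "layout_2": {
        "main_bed_5_1": 6,
        "upper_bed_4_0": 4,
        "stereo_pairs": 1 * 2,   # one stereo pair
        "objects": 4,
    },
}


def total_channels(layout: dict[str, int]) -> int:
    """Sum the channels used by every component of a layout."""
    return sum(layout.values())


for name, layout in LAYOUTS.items():
    print(f"{name}: {total_channels(layout)} channels")
```

In other words, the second layout trades one stereo pair for two extra objects.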

Content

The 5.1 main bed is a traditional 5.1 surround layer that contains no metadata. Even without metadata, normal panning can of course still be applied in the mix, though it is likely to be static.

The upper bed represents four ceiling-mounted, downward-facing speakers. Here, too, there is no metadata, and panning will most likely be static.
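Panning without metadata simply means that the pan gains are applied during mixing and baked into the discrete bed channels. As a minimal sketch, here is a static constant-power (sine/cosine) pan between two adjacent speakers. The pan law actually used by OBS is not stated, so the choice of law and the `constant_power_pan` helper are assumptions for illustration only.

```python
import math


def constant_power_pan(sample: float, position: float) -> tuple[float, float]:
    """Split one mono sample between two adjacent speakers.

    position = 0.0 sends everything to the first speaker, 1.0 to the second,
    and 0.5 places the source midway. The sine/cosine law (an assumed choice)
    keeps the summed power constant: g1**2 + g2**2 == 1 for every position.
    """
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be in [0, 1]")
    angle = position * math.pi / 2
    return sample * math.cos(angle), sample * math.sin(angle)


# A static pan: the same position is used for the whole programme, so the
# resulting gains end up baked into the two discrete channels.
first, second = constant_power_pan(1.0, 0.5)
print(f"{first:.3f} {second:.3f}")  # both about 0.707, i.e. -3 dB
```

Because nothing about the position travels with the signal, a downstream receiver only ever sees the two resulting channel levels.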

The 5.1.4 beds would typically contain a traditional mix of the atmosphere, for instance the ambience in a football stadium.

The stereo channel(s) could, for example, contain the same ambience, but in stereo.

The objects could be as simple as static international commentary or other static audio. As mentioned above, the transmission from the Olympics will carry no dynamic metadata, even though the Dolby® Atmos™ format supports it.

Is there a loudness standard for this?

There are a number of international standards for measuring the loudness of stereo and surround audio, and you might wonder whether there is also one for immersive audio.

The answer is yes. ITU-R BS.1770, in its latest version 4 (ITU-R BS.1770-4), covers the most popular configurations and specifies the channel weighting factors for measuring layouts of up to 22.2.
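At its core, BS.1770 sums the K-weighted mean square of each channel, multiplies each by a channel weight, and converts the result to LKFS: L_K = -0.691 + 10 log10(Σ G_i z_i). The sketch below shows only that summation step for a 5.1.4 bed. The K-weighting filter and the gating stage are left out, the speaker angles are assumed nominal positions, and the position-dependent weights (1.41 for ear-level channels between 60° and 120° azimuth, 1.0 elsewhere, LFE excluded) reflect our reading of BS.1770-4, so check the Recommendation itself before relying on them.

```python
import math
from dataclasses import dataclass


@dataclass
class Channel:
    name: str
    azimuth_deg: float    # 0 = front centre; sign convention is an assumption
    elevation_deg: float  # 0 = ear level, positive = above
    is_lfe: bool = False


def channel_weight(ch: Channel) -> float:
    """Position-dependent weight G_i, per our reading of ITU-R BS.1770-4."""
    if ch.is_lfe:
        return 0.0  # LFE is excluded from the loudness measurement
    if abs(ch.elevation_deg) < 30 and 60 <= abs(ch.azimuth_deg) <= 120:
        return 1.41  # ear-level side/surround channels (about +1.5 dB)
    return 1.0


def loudness_lkfs(channels: list[Channel], mean_squares: list[float]) -> float:
    """L_K = -0.691 + 10 * log10(sum_i G_i * z_i).

    mean_squares holds z_i, the mean square of each channel AFTER K-weighting.
    The K-weighting filter and the gating stage are deliberately left out.
    """
    total = sum(channel_weight(ch) * z for ch, z in zip(channels, mean_squares))
    if total <= 0.0:
        return float("-inf")  # digital silence
    return -0.691 + 10 * math.log10(total)


# Assumed nominal speaker positions for a 5.1.4 bed (main bed + upper bed).
LAYOUT_5_1_4 = [
    Channel("L", 30, 0), Channel("R", -30, 0), Channel("C", 0, 0),
    Channel("LFE", 0, 0, is_lfe=True),
    Channel("Ls", 110, 0), Channel("Rs", -110, 0),
    Channel("Ltf", 30, 45), Channel("Rtf", -30, 45),
    Channel("Ltr", 110, 45), Channel("Rtr", -110, 45),
]

z = [0.01] * len(LAYOUT_5_1_4)  # equal K-weighted power in every channel
print(f"{loudness_lkfs(LAYOUT_5_1_4, z):.2f} LKFS")
```

Note how the upper-bed channels count with weight 1.0 even when they sit above the surround positions, because their elevation takes them outside the 1.41 zone.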

NHK 22.2

Besides the 16-channel immersive audio format discussed above, NHK, Japan's main national broadcaster, will do an additional transmission using 24 audio channels in the 22.2 format.

22.2 is the surround sound component of the new television standard Super Hi-Vision. Developed by NHK Science & Technology Research Laboratories, it uses 24 speakers, including two subwoofers, arranged in three layers plus LFE: an upper layer of 9 channels, a middle layer of 10 channels, a lower layer of 3 channels and 2 LFE channels.
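The name "22.2" follows the same convention as "5.1": full-range channels before the dot, LFE channels after it. A tiny sketch, using only the layer counts given above, shows how the three layers and the two LFE channels add up to 24. The variable names are illustrative.

```python
# NHK 22.2 channel layout as described above: three layers plus LFE.
LAYERS_22_2 = {
    "upper": 9,
    "middle": 10,
    "lower": 3,
}
LFE_CHANNELS = 2

full_range = sum(LAYERS_22_2.values())   # 22 full-range channels
total = full_range + LFE_CHANNELS        # 24 channels in all

# The "22.2" name reads as <full-range channels>.<LFE channels>.
print(f"{full_range}.{LFE_CHANNELS} -> {total} channels")
```

That is eight channels more than the 16-channel OBS feed.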

Super Hi-Vision will be produced from a limited number of venues and for limited distribution.

TouchMonitor and Immersive Audio

With its simple and flexible user interface and its ability to monitor up to 32 audio channels simultaneously, TouchMonitor fits the need to monitor immersive audio streams very well, and with a selection of form factors, it will prove useful to engineers in production as well as engineers downstream.

In my next blog post, I will discuss which parts of the immersive audio signal need to be monitored, how they should be monitored and how to set up a TouchMonitor for that purpose.

Dolby® Atmos™

For those of you who are new to this, Dolby® Atmos™ is the name of a surround sound technology developed by Dolby Laboratories, and the technology supports up to 128 audio channels.

On top of the audio channels themselves, the Dolby® Atmos™ technology allows engineers to add associated spatial audio description metadata to the audio channels.

An audio channel that includes metadata is called an audio object.
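The distinction can be captured in a few lines: a channel is just audio, and it becomes an object once spatial metadata is attached. The model below is a hypothetical illustration of that definition only; it is not Dolby's actual metadata format, and the `Position` coordinates are made up for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Position:
    x: float  # hypothetical normalised room coordinates, -1.0 .. 1.0
    y: float
    z: float


@dataclass
class AudioChannel:
    label: str
    samples: list[float]
    metadata: Optional[Position] = None  # spatial description, if any

    @property
    def is_object(self) -> bool:
        """An audio channel that carries metadata is an audio object."""
        return self.metadata is not None


commentary = AudioChannel("commentary", [0.0] * 4)  # discrete channel, no metadata
ball = AudioChannel("ball", [0.0] * 4, Position(0.2, 0.5, 0.0))
print(commentary.is_object, ball.is_object)  # False True
```

Keeping the metadata optional mirrors the definition above: the audio itself is identical either way, and only the attached description changes.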

MPEG-H

The MPEG-H 3D Audio system was developed by Fraunhofer and others within the ISO/IEC Moving Picture Experts Group (MPEG) to support coding audio as audio channels, audio objects or higher order ambisonics (HOA).

MPEG-H 3D Audio can support up to 64 loudspeaker channels and 128 codec core channels.

Channels, objects and HOA components may be used to transmit immersive sound as well as mono, stereo or surround sound. The MPEG-H 3D Audio decoder renders the bitstream to a number of standard speaker configurations as well as to speakers placed at non-standard positions.

Binaural rendering of sound for headphone listening is also supported. Objects may be used alone or in combination with channels or HOA components.

The use of audio objects allows for interactivity or personalization of a program by adjusting the gain or position of the objects during rendering in the MPEG-H decoder.
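As a simple illustration of such personalization, the sketch below applies a listener-chosen level change to one object, for example to raise the commentary. It assumes the broadcaster limits how far the listener may adjust the object; the ±6 dB range and the `apply_user_gain` function are hypothetical and are not the MPEG-H decoder's API.

```python
def apply_user_gain(samples: list[float], user_offset_db: float,
                    min_db: float = -6.0, max_db: float = 6.0) -> list[float]:
    """Apply a listener-chosen gain offset to one object's samples.

    The offset is clamped to [min_db, max_db], a hypothetical range the
    broadcaster allows for this object, then converted from dB to a linear
    factor (10 ** (dB / 20)).
    """
    clamped = max(min_db, min(max_db, user_offset_db))
    factor = 10 ** (clamped / 20)
    return [s * factor for s in samples]


dialogue = [0.1, -0.2, 0.3]
louder = apply_user_gain(dialogue, 12.0)  # request +12 dB, clamped to +6 dB
print([round(s, 3) for s in louder])
```

Clamping the request keeps the personalized mix within limits the content creator has approved, while the rest of the programme is rendered unchanged.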

Please note: Because the 2020 Olympics were postponed to 2021, we have updated the year in this article accordingly.
